Measurements affect behavior. Wrong behavior results when metrics are confusing or do not represent what is truly happening. Leaders of many respected companies are paying the price for creating an environment in which measurements did not reflect accurately what was occurring in their organization.

In contrast, the wise selection of metrics and their tracking within an overall business system can lead to activities that result in moving toward achieving the three Rs of business: everybody doing the right things, doing them right and doing them at the right time.

Measurement issues can be prevalent at all levels of an organization. To add to this dilemma, the basic calculation and presentation of metrics can sometimes be deceiving. Organizations often state that suppliers must meet process capability objectives, typically measured in Cp, Cpk, Pp and Ppk. The requesters of these objectives often do not realize, however, that these reported numbers can be highly dependent upon how data is collected and interpreted. Also, these process capability metrics typically are utilized only at a component part level. To resolve these issues, practitioners need a common, easy-to-use fundamental measurement for making process stability and capability assessments at all levels of a business, independent of who is making the assessment – something beyond Cp, Cpk, Pp and Ppk.

Calculating Process Capability and Performance Indices

The process capability index Cp represents the allowable tolerance interval spread in relation to the actual spread of the data when the data follows a normal distribution. The equation to calculate this index is:

$C_p = \frac{USL - LSL}{6s}$, where

USL and LSL are the upper specification limit and lower specification limit, respectively, and 6s describes the range or spread of the process. Data centering is not taken into account in this equation.

Cp addresses only the spread of the process; Cpk is used concurrently to consider both the spread and the mean shift of the process. Mathematically, Cpk is the minimum of two quantities, as shown in this formula:

$C_{pk} = \min\left[\frac{USL - \bar{x}}{3s}, \frac{\bar{x} - LSL}{3s}\right]$, where $\bar{x}$ is the process mean.

The relationship between Pp and Ppk is similar to that between Cp and Cpk. Differences in the magnitudes of indices are from differences in standard deviation (s) values. Cp and Cpk are determined from short-term standard deviation, while Pp and Ppk are determined using long-term standard deviation. Sometimes the relationship between Pp and Ppk is referred to as process performance.
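As a rough illustration of how the four indices differ, the sketch below computes them from subgrouped data. It assumes the standard definitions: Cp as the tolerance width divided by 6s, and Cpk as the smaller of the two distances from the mean to a specification limit, divided by 3s. The short-term estimate (average subgroup range divided by the control chart constant d2) and the long-term estimate (sample standard deviation of all values) are only one common pairing of estimators. The function name, data and specification limits are hypothetical.

```python
from statistics import mean, stdev

D2_N5 = 2.326  # control chart constant d2 for subgroups of size 5


def capability_indices(subgroups, lsl, usl):
    """Return Cp, Cpk, Pp and Ppk for subgroups of five values each."""
    values = [x for group in subgroups for x in group]
    x_bar = mean(values)

    # Short-term sigma (assumed estimator): average subgroup range / d2.
    s_short = mean(max(g) - min(g) for g in subgroups) / D2_N5

    # Long-term sigma (assumed estimator): sample standard deviation of all values.
    s_long = stdev(values)

    def spread_index(s):
        return (usl - lsl) / (6 * s)

    def centered_index(s):
        return min((usl - x_bar) / (3 * s), (x_bar - lsl) / (3 * s))

    return {
        "Cp": spread_index(s_short),
        "Cpk": centered_index(s_short),
        "Pp": spread_index(s_long),
        "Ppk": centered_index(s_long),
    }


# Hypothetical data: three days, five sequentially produced parts per day.
subgroups = [
    [9.9, 10.0, 10.1, 10.0, 9.9],
    [10.3, 10.4, 10.2, 10.3, 10.4],
    [9.7, 9.6, 9.8, 9.7, 9.6],
]
indices = capability_indices(subgroups, lsl=9.0, usl=11.0)
for name, value in indices.items():
    print(f"{name}: {value:.2f}")
```

Because the daily averages in this made-up data set move around more than the parts within a day, the short-term and long-term standard deviations differ, and so do the two pairs of indices.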

Although standard deviation is an integral part of the calculation of process capability, the method used to calculate it is rarely scrutinized adequately. It can be impossible to determine a desired metric if data is not collected in the appropriate fashion. Consider the following three sources of continuous data:

◈ Situation 1: Small groups of data are taken periodically and could be tracked in a time series using an x-bar and R control chart.

◈ Situation 2: Single data points are taken periodically and could be tracked in a time series using an individuals (X) chart.

◈ Situation 3: Random data is taken from a set of completed transactions or products where there is no time dependence.

All three of the above situations are possible sources of information, each with its own approach for determining standard deviation in the process capability and performance equations. The figure below illustrates the mechanics of these and three additional approaches for this calculation. Methods 2 to 5 require practitioners to maintain time order, while 1 and 6 do not.

Various Ways to Calculate Process Standard Deviation (Source: Integrated Enterprise Excellence Volume III – Improvement Project Execution: A Management and Black Belt Guide for Going Beyond Lean Six Sigma and the Balanced Scorecard [Bridgeway Books, 2008])
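To make the differences concrete, the sketch below implements several commonly used estimators of process standard deviation. These are standard textbook estimators and are not claimed to match the numbered methods in the figure one for one. The constants d2 and c4 are the usual control chart constants for the subgroup sizes shown; the function names and the data are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev, variance

D2 = {2: 1.128, 5: 2.326}  # d2 constants by subgroup size
C4 = {5: 0.9400}           # c4 constant by subgroup size


def overall_sd(values):
    """Sample standard deviation of all values; time order is not needed."""
    return stdev(values)


def rbar_sd(subgroups):
    """Average subgroup range divided by d2."""
    n = len(subgroups[0])
    return mean(max(g) - min(g) for g in subgroups) / D2[n]


def sbar_sd(subgroups):
    """Average subgroup standard deviation divided by c4."""
    n = len(subgroups[0])
    return mean(stdev(g) for g in subgroups) / C4[n]


def moving_range_sd(values):
    """Average moving range of time-ordered individual values divided by d2 for n = 2."""
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    return mean(moving_ranges) / D2[2]


def pooled_sd(subgroups):
    """Pooled standard deviation for equally sized subgroups."""
    return sqrt(mean(variance(g) for g in subgroups))


# Hypothetical data: three days, five sequentially produced parts per day.
subgroups = [
    [9.9, 10.0, 10.1, 10.0, 9.9],
    [10.3, 10.4, 10.2, 10.3, 10.4],
    [9.7, 9.6, 9.8, 9.7, 9.6],
]
values = [x for g in subgroups for x in g]

for name, sd in [
    ("overall", overall_sd(values)),
    ("R-bar / d2", rbar_sd(subgroups)),
    ("s-bar / c4", sbar_sd(subgroups)),
    ("MR-bar / d2", moving_range_sd(values)),
    ("pooled", pooled_sd(subgroups)),
]:
    print(f"{name}: {sd:.4f}")
```

The estimators that look only inside each subgroup return a much smaller value here than the overall standard deviation, because the made-up daily averages differ from one another.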

Practitioners need to be careful about the methods they use to calculate and report process capability and performance indices. A customer may ask for Cp and Cpk metrics when the documentation may really stipulate the use of a long-term estimate for standard deviation. Using Cp and Cpk values, which account for short-term variability, could yield a very different conclusion about how a product is performing relative to customer needs. A misunderstanding like this between customer and supplier could be costly.

Common Issues with Process Capability and Performance Indices

Several issues with process capability and performance indices are prevalent. For instance, capability reports must be accompanied by a statistical control chart that demonstrates the stability of the process. If this is not done and the process has shifted, for example, the index would be calculated across two different processes when, in reality, only one of the two can exist at a time. Also, if the source data is skewed and not normally distributed, the out-of-specification rate may be significantly greater than the Cpk and Ppk values predict. Other issues include:

◈ When an x-bar and R process control chart is not in control, calculated short-term standard deviations typically are significantly smaller than the long-term standard deviations, which results in Cp and Cpk indices that make process performance appear better than it really is.

◈ Different types of control charts for a given process can provide differing perspectives relative to stability. For example, an x-bar and R control chart can appear to be out of control due to regular day-to-day variability effects such as raw material, while a daily subgrouping individuals control chart could indicate the process is stable.

◈ Determined values for capability and performance indices are more than a function of chance; they depend on how someone chooses to sample from a process. For example, if a practitioner were to choose a daily subgrouping of five sequentially produced parts and determine process capability, they could get a much larger Cp and Cpk value than someone who had a daily subgrouping of one, because the variability among sequential parts excludes day-to-day effects.

◈ The equations above for determining these indices assume normality and that a specification exists, which is often not the case.
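The dependence on sampling plan can be illustrated with a small simulation. The sketch below is a hypothetical example, not data from any real process: it generates 50 days of output in which the daily average drifts (standard deviation 1.0) and parts within a day vary far less (standard deviation 0.3). It then estimates Cp twice from the same simulated process, once from daily subgroups of five sequential parts and once from a single part per day. The specification limits, seed and noise levels are all assumptions.

```python
import random
from statistics import mean

random.seed(1)  # fixed seed so the sketch is repeatable

LSL, USL = 94.0, 106.0  # hypothetical specification limits

# Simulate 50 days; each day has its own mean plus small within-day noise.
days = []
for _ in range(50):
    day_mean = random.gauss(100.0, 1.0)
    days.append([random.gauss(day_mean, 0.3) for _ in range(5)])

# Plan A: daily subgroup of five sequential parts, sigma = R-bar / d2 (d2 = 2.326).
sigma_five = mean(max(d) - min(d) for d in days) / 2.326

# Plan B: one part per day, sigma = average moving range / d2 (d2 = 1.128).
singles = [d[0] for d in days]
sigma_one = mean(abs(b - a) for a, b in zip(singles, singles[1:])) / 1.128

cp_five = (USL - LSL) / (6 * sigma_five)
cp_one = (USL - LSL) / (6 * sigma_one)

print(f"Cp from daily subgroups of five: {cp_five:.2f}")
print(f"Cp from one part per day:        {cp_one:.2f}")
```

In this sketch the within-day estimate sees only the 0.3 noise, while the one-per-day estimate also absorbs the day-to-day drift, so two practitioners looking at the same process would report very different indices.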

Changing the Approach

One alternative to the capability index is reporting the performance of stable processes as a predicted long-term nonconformance percentage or parts-per-million (PPM) defective rate. This alternative does not depend on any condition except long-term predictability. If no tolerance exists, a recommended capability metric is a median and 80 percent frequency of occurrence range from a long-term predictable process. This methodology allows for the assessment of non-normal data capability, such as that found in time-based and many other process metrics.

These measurement objectives are accomplished when utilizing the following scorecard process:

1. Assess process predictability (i.e., whether it is in statistical control)

2. When the process is considered predictable, formulate a prediction statement for the latest region of stability. The usual reporting format for this prediction statement is the following:

a. Nonconformance percentage or defects per million opportunities (DPMO) when there is a specification requirement

b. Median response and 80 percent frequency of occurrence rate when there is no specification requirement
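The two reporting formats in step 2 can be sketched as follows, assuming the data already comes from a region the practitioner has judged to be stable. This is a simplified stand-in, not the article's own procedure: with a specification it fits a normal distribution and reports the estimated fraction outside the limits, and without one it reports the median with the empirical 10th and 90th percentiles as the 80 percent band. The function name and the sample data are hypothetical.

```python
from statistics import NormalDist, median, quantiles


def prediction_statement(values, lsl=None, usl=None):
    """Summarize a stable region of data in one of the two reporting formats."""
    if lsl is None and usl is None:
        # No specification: median and an 80 percent frequency of occurrence band.
        deciles = quantiles(values, n=10)  # nine cut points: 10%, 20%, ..., 90%
        return {"median": median(values), "p10": deciles[0], "p90": deciles[-1]}

    # Specification exists: estimate the fraction beyond the limits,
    # assuming (for this sketch only) that the data is normally distributed.
    fit = NormalDist.from_samples(values)
    below = fit.cdf(lsl) if lsl is not None else 0.0
    above = 1.0 - fit.cdf(usl) if usl is not None else 0.0
    fraction = below + above
    return {"percent_nonconforming": 100 * fraction, "ppm": 1e6 * fraction}


# Hypothetical measurements from the latest stable region of a process.
stable_region = [10.2, 9.8, 10.5, 9.9, 10.1, 10.4, 9.7, 10.0, 10.3, 9.6, 10.2, 9.9]

print(prediction_statement(stable_region))
print(prediction_statement(stable_region, lsl=9.0, usl=11.0))
```

A normal fit is only reasonable when the data supports it; for skewed metrics a different distribution or a transformation would be needed before quoting a nonconformance rate.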

With this approach, day-to-day raw material variability is treated as a noise variable to the overall process. In other words, raw material is considered a potential source of common cause variability. Because of this, practitioners need a measurement strategy that considers the between-day variability when establishing control limits for a control chart. This does not occur with a traditional x-bar and R control chart because within-subgroup variability mathematically determines the control limits.

Benefits of Zooming Out

Practitioners track the process using an individuals control chart that has an infrequent sampling plan, in which the typical noise variation of the process occurs between samples. It should be noted that the purpose of this 30,000-foot-level metric reporting is not to give timely control to a process or insight into what may be causing unsatisfactory process capability. The only intent of the metric is to provide an operational picture of what the customer of the process experiences and to separate common cause from special cause variability.

For continuous data, whenever a process is within the control limits and there are no trends, the process typically is said to be in control, or stable. For processes that have a recent region of stability, it is possible to say that the process is predictable. Data from this region of predictability can be considered a random sample of the future.
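A minimal sketch of the control-limit check for an individuals chart follows. It uses the conventional limits of the mean plus or minus 2.66 times the average moving range (2.66 is 3 divided by d2 = 1.128). It checks only for points beyond the limits; tests for trends or runs are omitted. The function names and data are hypothetical.

```python
from statistics import mean


def individuals_chart_limits(values):
    """Return (LCL, center line, UCL) for time-ordered individual values."""
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    half_width = 2.66 * mean(moving_ranges)  # 3 / d2, with d2 = 1.128
    return center - half_width, center, center + half_width


def out_of_limit_points(values):
    """Return the indices of points that fall outside the control limits."""
    lcl, _, ucl = individuals_chart_limits(values)
    return [i for i, x in enumerate(values) if x < lcl or x > ucl]


# Hypothetical infrequent samples, one value per sampling period.
samples = [10.2, 9.8, 10.5, 9.9, 10.1, 10.4, 9.7, 10.0, 10.3, 9.6]

print(individuals_chart_limits(samples))
print(out_of_limit_points(samples))           # no points flagged
print(out_of_limit_points(samples + [14.0]))  # the added value is flagged
```

Because the moving range is taken between consecutive samples, the between-sample noise of the process is built into the limits, which is the behavior this high-level view relies on.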

A probability plot is a powerful approach for providing the process prediction statement. For this high-level process view, practitioners can quantify the capability of the process in a form that everyone understands. If no specification exists, a best-estimate 80 percent frequency of occurrence band could be reported, along with a median estimate.

When specifications or nonconformance regions do exist, practitioners can determine the capability of the process in some form of proportion units beyond the criteria, such as PPM, DPMO or percent nonconformance, along with a related cost of poor quality (COPQ) or the cost of doing nothing differently (CODND) monetary impact. The COPQ or CODND metric, along with any customer satisfaction issues, can then be assessed to determine whether improvement efforts are warranted.

Transformations may be appropriate for both assessing process predictability and making a prediction statement; only transformations that make physical sense for a given situation should be used.
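As one hedged example, a log transformation often makes physical sense for a metric such as cycle time, which cannot be negative and tends to have a long right tail. The sketch below fits a normal distribution to the logged values and estimates the fraction expected beyond an upper limit. The choice of a log transformation, the function name, the data and the limit are all assumptions made for illustration.

```python
from math import log
from statistics import NormalDist


def lognormal_fraction_above(values, usl):
    """Estimate the fraction above usl after a log transformation.

    Assumes all values and usl are positive and that the logged
    data is roughly normal.
    """
    fit = NormalDist.from_samples([log(x) for x in values])
    return 1.0 - fit.cdf(log(usl))


# Hypothetical cycle times in days from a stable region.
cycle_times = [2.1, 3.4, 1.8, 5.9, 2.7, 4.2, 3.1, 2.4, 8.5, 3.8]

fraction = lognormal_fraction_above(cycle_times, usl=10.0)
print(f"Estimated percent beyond 10 days: {100 * fraction:.2f}")
```

The same transformed values could also be used on the individuals chart when assessing predictability, so that the stability check and the prediction statement rest on the same scale.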

Also, this 30,000-foot-level metric reporting structure can be used throughout an enterprise as an organization performance metric, which may keep practitioners from firefighting common cause variability as though it were special cause. A similar approach also would apply to the tracking of attribute data, allowing for a single unit of measurement for both attribute and continuous data situations within manufacturing, development and transactional processes.

Switching Methods

Most people find it easy to visualize and interpret an estimated PPM rate beyond customer objectives as a reported process capability or performance index. Because of this, organizations should consider using the metric described here with appropriate data transformations, in lieu of Cp, Cpk, Pp and Ppk and other process metrics, whenever possible. When a customer asks for these, a supplier could provide the calculated indices along with a control chart that shows predictability, while highlighting the predicted nonconformance proportion.

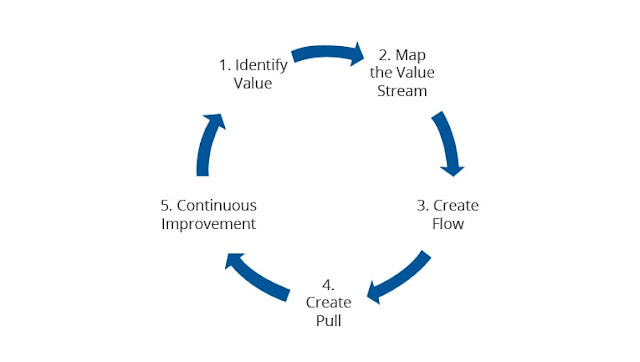

A Lean Six Sigma deployment needs a sound infrastructure for the selection of projects, which should be linked to the goals and metrics of the overall business. A high-level individuals control chart can be a useful performance metric not only for projects, but also for value-chain performance metrics. This metric, coupled with an effective project execution roadmap, can lead to significant bottom-line benefits and improved customer satisfaction for an organization.